Why Snowflake? The Architecture That Changes Everything

Snowflake’s core advantage is simple: it separates compute from storage. You scale each independently, pay only for what you use, and avoid the over-provisioning trap that makes legacy warehouses expensive. Add multi-cloud support (AWS, Azure, GCP), native data sharing, zero-copy cloning, and Time Travel for point-in-time queries, and you have a platform designed for the way modern businesses actually work.

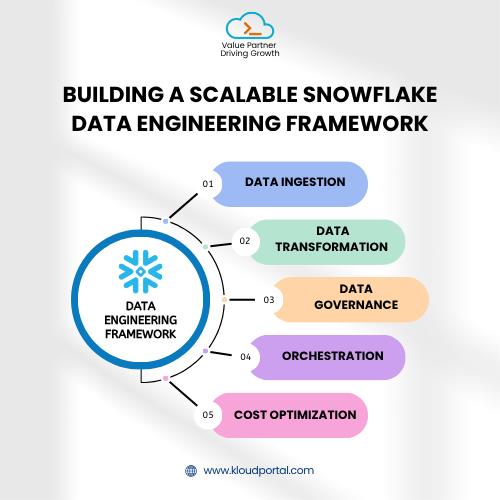

Building an End-to-End Snowflake Data Engineering Strategy

A real Snowflake strategy covers five interconnected layers:

Data Ingestion — Get the Right Data in

Use COPY INTO for scheduled batch loads, Snowpipe for continuous loading as new files arrive, Kafka connectors for real-time streams, and ELT tools like Fivetran or Airbyte for SaaS sources. The principle: ingest raw first, transform later. This ELT approach preserves data fidelity and gives your team flexibility.
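As a rough illustration of the ingest-raw-first principle, the sketch below builds a `COPY INTO` statement that loads staged JSON files into a landing table without reshaping them. The table `raw.orders`, the stage path `@raw_stage/orders/`, and the helper `build_copy_into` are hypothetical names chosen for this example; a real pipeline would send the statement through a Snowflake client instead of printing it.

```python
def build_copy_into(table: str, stage_path: str, file_type: str = "JSON") -> str:
    """Return a COPY INTO statement that loads staged files as-is.

    All object names are placeholders. No transformation is applied,
    so the landing table keeps the source data unchanged.
    """
    return (
        f"COPY INTO {table}\n"
        f"FROM {stage_path}\n"
        f"FILE_FORMAT = (TYPE = '{file_type}')\n"
        f"ON_ERROR = ABORT_STATEMENT;"
    )


# Hypothetical landing table and stage, for illustration only.
copy_sql = build_copy_into("raw.orders", "@raw_stage/orders/")
print(copy_sql)
```

Keeping the load statement free of business logic means a bad transformation can be fixed and rerun later without re-extracting from the source.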

Data Transformation — dbt is the Standard

dbt (data build tool) has become the de facto standard for Snowflake transformations. Write modular SQL, version-control your logic, and run automated data quality tests. A well-structured transformation layer moves data through three zones: Staging (clean raw data) → Intermediate (business logic applied) → Mart (analytics-ready datasets).
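The three zones can be sketched in a few lines. In practice each zone would be a set of SQL models; the plain Python below is only a stand-in to show what belongs in each layer, and the sample rows, field names, and the "completed orders count as revenue" rule are all invented for illustration.

```python
from collections import defaultdict

# Hypothetical raw rows as they might land from an ingestion tool.
RAW_ORDERS = [
    {"order_id": "1", "region": " emea ", "amount": "120.50", "status": "complete"},
    {"order_id": "2", "region": "EMEA", "amount": "80.00", "status": "cancelled"},
    {"order_id": "3", "region": "apac", "amount": "200.00", "status": "complete"},
]


def staging(rows):
    """Staging: fix types and formatting only, no business rules."""
    return [
        {
            "order_id": int(r["order_id"]),
            "region": r["region"].strip().upper(),
            "amount": float(r["amount"]),
            "status": r["status"],
        }
        for r in rows
    ]


def intermediate(rows):
    """Intermediate: apply business logic (only completed orders count)."""
    return [r for r in rows if r["status"] == "complete"]


def mart(rows):
    """Mart: aggregate into an analytics-ready shape (revenue per region)."""
    revenue = defaultdict(float)
    for r in rows:
        revenue[r["region"]] += r["amount"]
    return dict(revenue)


revenue_by_region = mart(intermediate(staging(RAW_ORDERS)))
print(revenue_by_region)  # {'EMEA': 120.5, 'APAC': 200.0}
```

Because each zone does one job, a change to the revenue rule touches only the intermediate step, and tests can target each layer separately.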

Data Governance — Trust at Scale

Snowflake’s native governance tools — column-level security, dynamic data masking, row access policies, and the Horizon data catalog — let you enforce access controls without bolting on external tools. Notably, Snowflake’s Data Trends 2024 report found that enterprises doubled their use of governance features and increased their consumption of governed data by nearly 150%. Governance isn’t optional at scale; it’s what makes scale sustainable.
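To make dynamic data masking concrete, here is a minimal sketch that generates the SQL for a masking policy and attaches it to a column. The policy name `email_mask`, the role `PII_READER`, the table `customers`, and both helper functions are assumptions made for this example; the statements are printed rather than executed.

```python
def masking_policy_sql(policy: str, allowed_roles: list[str]) -> str:
    """Build a CREATE MASKING POLICY statement for a string column.

    Roles in allowed_roles see the real value; every other role
    sees a fixed placeholder. All names are illustrative.
    """
    roles = ", ".join(f"'{role}'" for role in allowed_roles)
    return (
        f"CREATE OR REPLACE MASKING POLICY {policy} AS (val STRING) RETURNS STRING ->\n"
        f"  CASE WHEN CURRENT_ROLE() IN ({roles}) THEN val ELSE '***MASKED***' END;"
    )


def apply_policy_sql(table: str, column: str, policy: str) -> str:
    """Build the ALTER TABLE statement that attaches the policy to a column."""
    return f"ALTER TABLE {table} MODIFY COLUMN {column} SET MASKING POLICY {policy};"


create_sql = masking_policy_sql("email_mask", ["PII_READER"])
attach_sql = apply_policy_sql("customers", "email", "email_mask")
print(create_sql)
print(attach_sql)
```

The policy lives in the platform rather than in each dashboard or query, so the same rule applies no matter which tool reads the column.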

Orchestration — Keep Pipelines Reliable

Tools like Apache Airflow, Prefect, or dbt Cloud handle scheduling and sequencing. Every pipeline run should be observable and recoverable, and it should raise an alert when it fails. Build this early: retrofitting observability onto a mature pipeline is painful and expensive.
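Orchestrators provide retries and alerting as built-in features, but the underlying idea fits in a short standard-library sketch. The function `run_with_retries`, the task `flaky_load`, and the list-based alert sink are hypothetical stand-ins, not the API of any orchestration tool.

```python
import logging
import time
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")


def run_with_retries(
    task: Callable[[], str],
    name: str,
    alert: Callable[[str], None],
    max_attempts: int = 3,
    backoff_seconds: float = 0.0,
) -> str:
    """Run a task, log every attempt, retry on failure, alert if all attempts fail."""
    for attempt in range(1, max_attempts + 1):
        try:
            result = task()
            log.info("%s succeeded on attempt %d", name, attempt)  # observable
            return result
        except Exception as exc:
            log.warning("%s failed on attempt %d: %s", name, attempt, exc)
            if attempt == max_attempts:
                # Out of retries: notify someone, then surface the error.
                alert(f"{name} failed after {max_attempts} attempts: {exc}")
                raise
            time.sleep(backoff_seconds * attempt)  # recoverable: wait, then retry


# A made-up task that fails once with a transient error, then succeeds.
calls = {"n": 0}


def flaky_load() -> str:
    calls["n"] += 1
    if calls["n"] < 2:
        raise RuntimeError("transient connection error")
    return "loaded"


alerts: list[str] = []
status = run_with_retries(flaky_load, "load_orders", alerts.append)
print(status, alerts)  # loaded []
```

The alert fires only after the final attempt, which keeps transient failures out of the on-call channel while still surfacing real outages.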

Cost Optimization — Scale Smart

Snowflake’s consumption model rewards discipline. Right-size virtual warehouses, set auto-suspend policies, profile expensive queries, apply clustering keys to large tables, and lean on Snowflake’s 24-hour result cache wherever possible.
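A back-of-the-envelope calculation shows why auto-suspend settings matter. The sketch below uses the standard credit rates per warehouse size and per-second billing with a 60-second minimum. It also makes a simplifying assumption: the warehouse suspends between runs and sits idle for the full auto-suspend window after each one. The workload numbers are invented.

```python
# Credits per hour for standard warehouses, by size.
CREDITS_PER_HOUR = {"XSMALL": 1, "SMALL": 2, "MEDIUM": 4, "LARGE": 8, "XLARGE": 16}


def credits_used(size: str, running_seconds: float) -> float:
    """Credits for one running period: per-second billing, 60-second minimum."""
    billed_seconds = max(running_seconds, 60)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


def daily_credits(
    size: str,
    busy_seconds_per_run: float,
    runs_per_day: int,
    auto_suspend_seconds: float,
) -> float:
    """Estimate daily credits, assuming each run is followed by a full idle window."""
    per_run = credits_used(size, busy_seconds_per_run + auto_suspend_seconds)
    return per_run * runs_per_day


# Hypothetical workload: a MEDIUM warehouse, 24 runs a day, 2 busy minutes each.
slow_suspend = daily_credits("MEDIUM", 120, 24, auto_suspend_seconds=600)
fast_suspend = daily_credits("MEDIUM", 120, 24, auto_suspend_seconds=60)
print(f"10-minute auto-suspend: {slow_suspend:.1f} credits/day")  # 19.2
print(f"1-minute auto-suspend:  {fast_suspend:.1f} credits/day")  # 4.8
```

Under these assumptions the shorter auto-suspend window cuts the daily bill to a quarter, because most of the billed time in the first case is idle time.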

Real-World Use Case: Retail Analytics at Scale

A mid-size e-commerce retailer had 15+ data sources (Shopify, Salesforce, Google Analytics, custom inventory systems) stitched together with CSV exports and aging on-prem infrastructure. End-of-day reporting took 6 hours to generate. After moving to a Snowflake-first architecture with Fivetran for ingestion, dbt for transformation, and Airflow for orchestration:

- End-of-day reports went from 6 hours to under 4 minutes

- Inventory optimization moved from monthly to daily cycles

- Row-level security ensured regional managers saw only their data

That’s not a technology upgrade. That’s a business transformation.

Designing for AI from Day One

Snowflake has evolved beyond being just a data warehouse; it is now an AI Data Cloud. With Snowflake Cortex, teams can run tasks powered by large language models (LLMs) such as summarization, classification, and sentiment analysis directly on their data, without transferring it to an external provider.
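For a sense of what this looks like in practice, the sketch below builds a query that calls two Cortex functions, `SNOWFLAKE.CORTEX.SENTIMENT` and `SNOWFLAKE.CORTEX.SUMMARIZE`, on a text column. The table `support.tickets`, the column `ticket_body`, and the helper `cortex_review_query` are hypothetical, and function availability can depend on your Snowflake region and account setup.

```python
def cortex_review_query(table: str, text_column: str) -> str:
    """Build a query that scores sentiment and summarizes a text column.

    Table and column names are placeholders. The query runs inside
    Snowflake, so the text does not leave the platform.
    """
    return (
        "SELECT\n"
        f"  {text_column},\n"
        f"  SNOWFLAKE.CORTEX.SENTIMENT({text_column}) AS sentiment_score,\n"
        f"  SNOWFLAKE.CORTEX.SUMMARIZE({text_column}) AS summary\n"
        f"FROM {table};"
    )


cortex_sql = cortex_review_query("support.tickets", "ticket_body")
print(cortex_sql)
```

Since these are ordinary SQL functions, they can sit in the same transformation models as the rest of the pipeline.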

If you are developing AI-powered products such as recommender systems, churn prediction models, or demand forecasts, designing your Snowflake environment to accommodate AI workloads from the very beginning will help you avoid a difficult retrofit later.

Where KloudPortal Fits into Your Snowflake Journey

Designing and executing a Snowflake data engineering strategy at scale is not a task that can be completed over a weekend. It requires deep expertise in various areas, including data ingestion, transformation, governance, orchestration, and cost management — often all at once.

KloudPortal brings practical Snowflake implementation experience to assist businesses in architecting solutions that are both technically sound and strategically aligned. Whether you are starting from scratch with a new Snowflake buildout or migrating from legacy data warehouses, our team understands how to transform raw data into real business value, without the trial-and-error pitfalls that usually slow down these initiatives.

If you’re considering a Snowflake-first strategy or looking to enhance your existing implementation, partner with the experts.

Conclusion

An end-to-end Snowflake data engineering strategy gives you reliable ingestion, governed transformation, and AI-ready activation at scale.

KloudPortal brings hands-on Snowflake implementation experience, from greenfield builds to legacy migrations, helping businesses move from raw data to real business value faster and with fewer wrong turns.

If you’re ready to build a Snowflake strategy that grows with your business, let’s talk!

Frequently Asked Questions

1. What is Snowflake data engineering?

2. How does Snowflake differ from traditional data warehouses?

3. What tools work best with Snowflake for data engineering?

The core stack: dbt (transformation), Fivetran or Airbyte (ingestion), Airflow or Dagster (orchestration), and Tableau or Looker (BI). For AI workloads, Snowflake Cortex and Snowpark keep everything within the platform without data egress.